Blog

Company Updates & Technology Articles

August 18, 2025

Why I Joined Scale – Christopher Kirchhoff

Agentic AI is the most exciting breakthrough Scale is pioneering. As a world leader in Agentic AI, Scale is putting AI agents to work that operate autonomously and are tireless in their ability to perform complex tasks.

Read more

August 13, 2025

Training the Next Generation of Enterprise Agents: Scale's Research in Reinforcement Learning

Language models provide impressive general capabilities off the shelf, but because they were not trained on private enterprise data they fall short of delivering the specialized performance enterprises need for their unique workflows, internal systems, and proprietary data. At Scale, our Safety, Evaluations and Alignment Lab (SEAL) recently published foundational reinforcement learning research, and our enterprise team is pioneering new approaches to solve this challenge through cutting-edge reinforcement learning research focused on training AI agents specifically for enterprise environments.

Read more

August 4, 2025

New Benchmarks Envision the Future of AI in Healthcare

A fundamental shift is underway in how AI for healthcare is evaluated. Recent studies from OpenAI, Google, and Microsoft move beyond simple accuracy scores to establish a new standard for measuring an AI's healthcare skills. This post provides an analysis of three distinct evaluation methodologies that redefine what "good" looks like for clinical AI. We explore how OpenAI's HealthBench uses a massive, rubric-based system to measure foundational safety; how Google's AMIE tests the nuanced, "soft skills" of an interactive diagnostic dialogue; and how Microsoft's SDBench validates an agent's ability to make strategic, cost-conscious decisions. By examining these benchmarks and their results, we provide a glance at the future of AI in healthcare.

Read more

August 4, 2025

The AI Risk Matrix: Evolving AI Safety and Security for Today

The shift from reactive models to agentic systems fundamentally alters the AI risk landscape, making frameworks that focus only on user intent and model output incomplete. To address this gap, we've evolved the AI Risk Matrix by adding a crucial third dimension: Model Agency. This article breaks down agency into three tiers—Tools, Agents, and Collectives—using concrete examples to illustrate how complex failures can now emerge from the system itself. We argue that this systemic view, which connects model behavior to traditional AppSec vulnerabilities, is essential for building the next generation of safe and reliable AI.

Read more

July 31, 2025

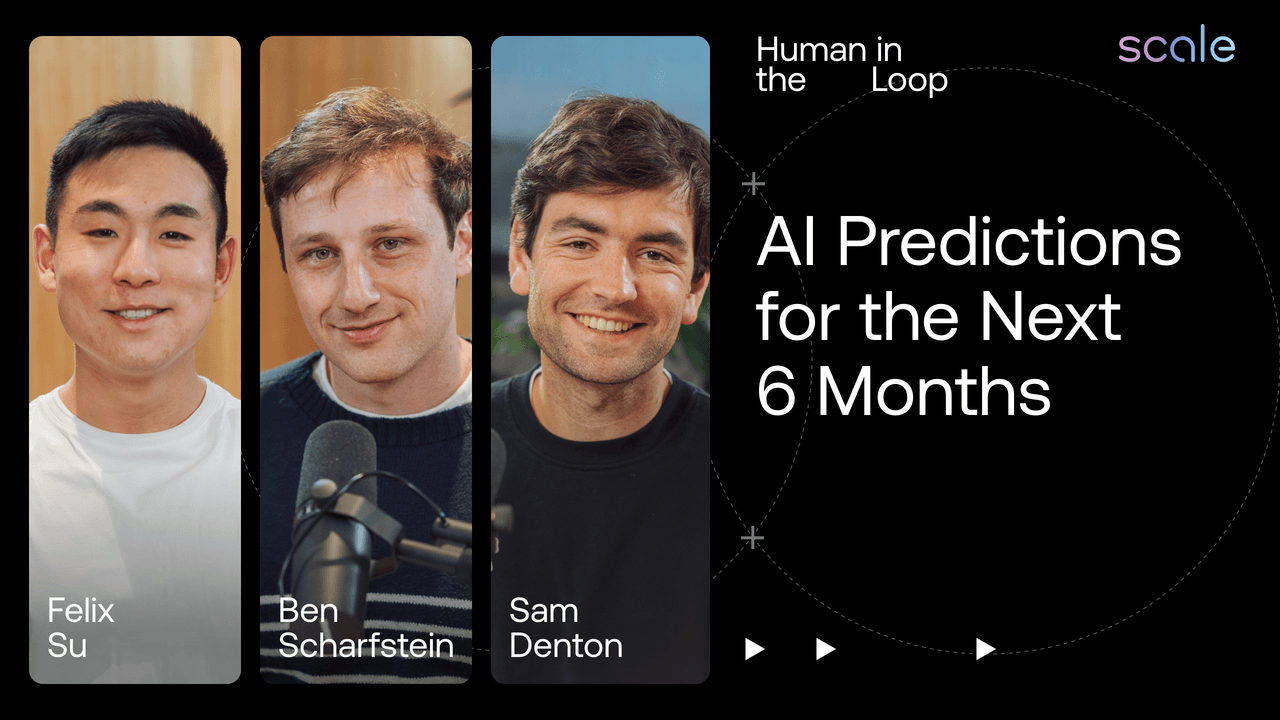

We try to predict the next 6 months in AI | Human in the Loop: Episode 11

We peer into our crystal ball and try to predict the next 6 months in enterprise AI. Are you surprised by any of these predictions?

Read more

July 28, 2025

Building Autoraters for Expert-Level Reasoning Data

Scale AI is one of the world’s leading suppliers of high-quality foundation model training data. In recent months we’ve seen a marked increase in demand for PhD-level, multimodal reasoning data across a multitude of domains, including math, coding, science, and humanities. Because of the ever-increasing difficulty of such expert-level data, ScaleAI invests in frontier research on quality control (QC) with LLM agents, also known as “autoraters.”

Read more

July 25, 2025

The U.S. AI Action Plan: A Welcome Step Toward American Leadership in AI

The release of the U.S. AI Action Plan is a major step toward solidifying America’s leadership in artificial intelligence. At Scale, we’re proud to support its priorities—from promoting U.S. AI globally, to expanding its use in government, to investing in innovation and infrastructure. Here’s our perspective on what this means for the future of AI.

Read more

July 24, 2025

We argued about recent AI headlines | Human in the Loop: Episode 10

Our Enterprise team breaks down recent AI developments and explores what (if anything) it means for the enterprise world.

Read more

July 23, 2025

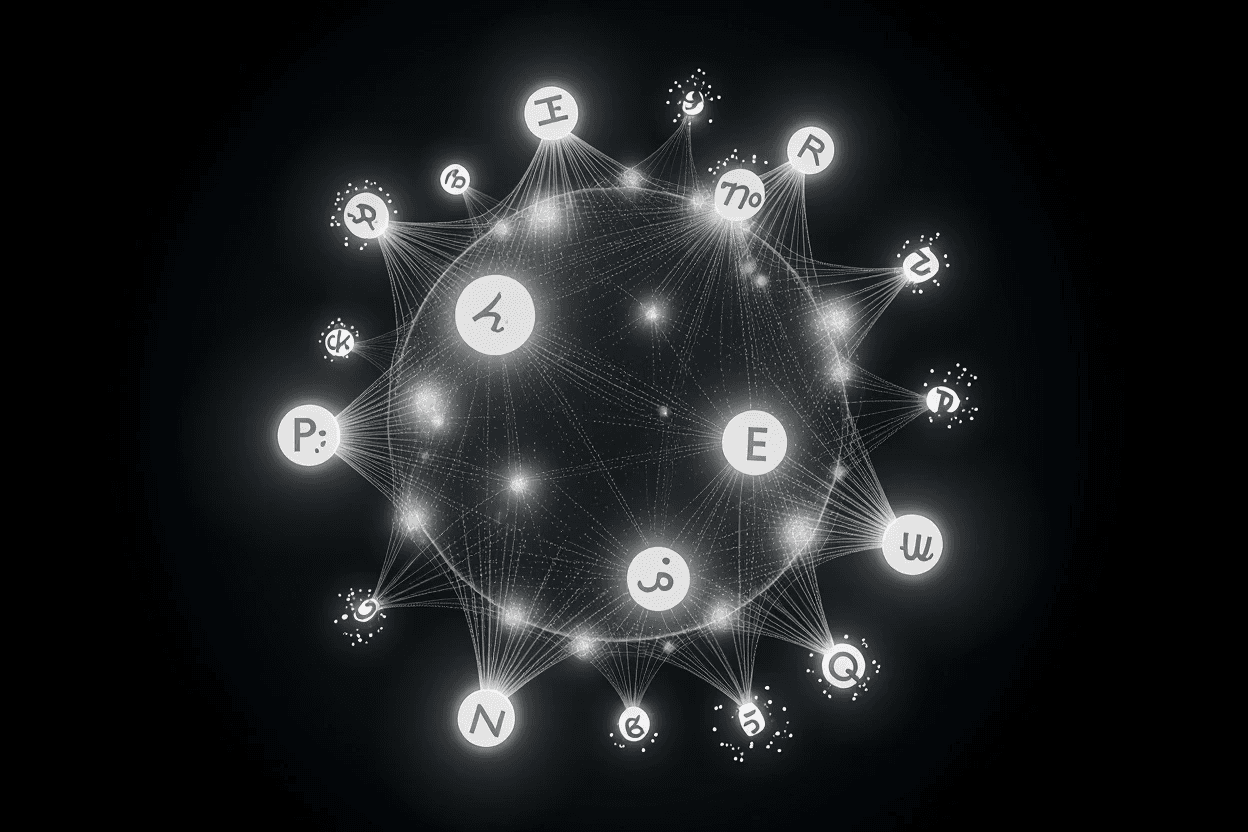

The Future is Multilingual: Scale's New Evaluation Benchmark

Building truly intelligent and equitable multilingual AI requires a new way to measure cultural reasoning. Scale's new Multilingual Native Reasoning Challenge (MultiNRC) is designed to do just that. Created from scratch by native speakers, this benchmark tests for deep linguistic and cultural understanding beyond simple translation, providing a clear path for the AI community to accelerate progress.

Read more

July 23, 2025

WebGuard: A Guardrail for the Agentic Age

As AI agents become more powerful, ensuring their safety is the most critical challenge for deployment. This post explores WebGuard, a new benchmark from researchers at Scale, UC Berkeley, and The Ohio State University that reveals a significant safety gap in current models. Learn how high-quality, human-in-the-loop data provides a path forward, dramatically improving a model's ability to avoid risky behavior.

Read more